Update to Installing UniVerse on CentOS post

I’ve been asked a few times how to get the U2 DBTools products, like XAdmin, to connect to their VirtualBox machine. I obviously left something out of the article, so I’ve gone back and updated the post to cover how to configure your firewall. You can follow these same steps for UniData as well.

Just to make sure you don’t miss it, I decided I should also duplicate it here…

Configure firewall

If you want to access UniVerse (say using XAdmin from the U2 DBTools package), you will need to modify your iptables configuration.

First, in my case I have the VirtualBox network adapter set to ‘Bridged’. Now, in a shell window, open the iptables configuration for editing: ‘sudo vi /etc/sysconfig/iptables’

In vi, before any LOG or REJECT lines, add ‘-A INPUT -m state --state NEW -m tcp -p tcp --dport 31438 -j ACCEPT’.

Once that is done, you simply run ‘sudo service iptables restart’ to pick up the changes.
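If you would rather not hand-edit the file, the same change can be scripted. What follows is only a rough sketch, not what I actually ran: the helper name add_rule is made up for this example, and it assumes the rules file has either a LOG/REJECT rule or a COMMIT line to insert in front of. It writes to a separate output file, so the input and output paths must differ.

```shell
# Sketch only: inserts the rule from this post into a copy of an iptables
# rules file. The helper name add_rule is hypothetical.
RULE='-A INPUT -m state --state NEW -m tcp -p tcp --dport 31438 -j ACCEPT'

add_rule() {
    # $1 = existing rules file, $2 = file to write the result to
    if grep -qxF -e "$RULE" "$1"; then
        # Rule already present: copy the file through unchanged.
        cat "$1" > "$2"
        return 0
    fi
    awk -v rule="$RULE" '
        # Print the new rule just before the first LOG or REJECT rule,
        # or before COMMIT if the file has neither.
        !done && (/-j (LOG|REJECT)/ || /^COMMIT/) { print rule; done = 1 }
        { print }
    ' "$1" > "$2"
}

# Possible use on the VM (assumption: run as root, and keep the backup):
#   cp /etc/sysconfig/iptables /root/iptables.bak
#   add_rule /root/iptables.bak /etc/sysconfig/iptables
#   service iptables restart
```

Running it a second time leaves the file unchanged, since the rule is only inserted when it is not already there.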

The updated iptables file

XAdmin once connected

Installing UniVerse on CentOS 6.2

Previously we looked at installing UniData on a Linux machine. This time around we are going to install UniVerse on CentOS 6.2. I’ve chosen CentOS as it is essentially a re-branded (de-branded?) version of Red Hat Enterprise Linux. RHEL is officially supported by Rocket Software, making CentOS a great free OS for playing around with U2 databases.

As always, I suggest you do this in a Virtual Machine so that you can create as many dedicated test systems as your heart desires (or storage limits). For this I’ve used Oracle’s VirtualBox, which is available for free.

Requirements

Okay, so to start, let’s make sure we have everything we need to do this:

- Suggested: Dual Core CPU or better (particularly if running as a VM)

- Suggested: 2GB RAM or better (particularly if running as a VM)

- VirtualBox software

- Latest CentOS LiveCD/LiveDVD ISO (as of 2012/06/07, version 6.2)

- UniVerse Personal Edition for Linux

Preparing the VM

After you have installed VirtualBox and have it running, we will need to create a new image to run CentOS. Doing this is as simple as clicking the ‘New’ button and following the prompts.

Most questions can be left as is, except for the operating system. For the operating system, set it to ‘Linux’ with version ‘Red Hat’.

My old laptop has 2GB of RAM, so I’m assigning 1GB to this machine image.

I stick with dynamic allocation of my disks for most testing as it is easier to move the smaller images around. For more serious work, you might be better served creating a fixed disk size as it generally performs better.

The default 8GB disk is just fine. You can always create and add more disks later.

Now that you have your machine image ready, select the image and click on the settings button. In this screen, click on the storage option and select the DVD drive under the IDE Controller. On the right-hand side there is a small CD/DVD icon you can click on; then select the option that lets you choose a CD/DVD image. This will let you select the CentOS ISO you downloaded so we can boot from it.

While in the settings screen, you should also add a shared folder and click on the read-only and auto-mount checkbox options.
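I did all of this through the VirtualBox GUI, but roughly the same setup can be done from a terminal on the host with VBoxManage. Treat the following as a sketch under assumptions rather than a tested recipe: the VM name, disk location, ISO path and shared folder are placeholders, and older VirtualBox releases use ‘createhd’ where this uses ‘createmedium disk’. By default it only prints the commands; set DRY_RUN=0 to actually run them.

```shell
# Sketch: command-line version of the GUI steps. All paths and names below
# are placeholders for illustration, not values from this post.
VM="CentOS-UniVerse"
DISK="$HOME/VirtualBox VMs/$VM/$VM.vdi"
ISO="$HOME/Downloads/centos-livecd.iso"
SHARE="$HOME/vmshare"

# Print each command instead of running it unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

create_vm() {
    # New VM of type Linux / Red Hat with 1GB of RAM.
    run VBoxManage createvm --name "$VM" --ostype RedHat --register
    run VBoxManage modifyvm "$VM" --memory 1024
    # 8GB disk; the default variant is dynamically allocated.
    run VBoxManage createmedium disk --filename "$DISK" --size 8192
    # Attach the disk and the CentOS ISO to an IDE controller.
    run VBoxManage storagectl "$VM" --name "IDE Controller" --add ide
    run VBoxManage storageattach "$VM" --storagectl "IDE Controller" \
        --port 0 --device 0 --type hdd --medium "$DISK"
    run VBoxManage storageattach "$VM" --storagectl "IDE Controller" \
        --port 1 --device 0 --type dvddrive --medium "$ISO"
    # Read-only, auto-mounted shared folder.
    run VBoxManage sharedfolder add "$VM" --name share \
        --hostpath "$SHARE" --readonly --automount
}

create_vm
```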

Installing CentOS

![CentOS-UniVerse [Running]](https://u2tech.files.wordpress.com/2016/07/centos-universe-running.png?w=595)

If you are not installing this as a virtual machine, you can burn the ISO image to CD/DVD and start the machine with the CD/DVD in the drive (or on modern machines, via USB drive). Only do this if you know what you are doing or are intending to have CentOS as the sole operating system. From here on in, I’ll be assuming you are taking the VM route.

Select the VM image and click on the start button.

CentOS should auto-boot from the CD/DVD image. Once it has loaded and is sitting at the desktop, there is an ‘Install to Hard Drive’ option. Click on this and follow the installation instructions CentOS provides you. Generally speaking, the default options are the ones you want.

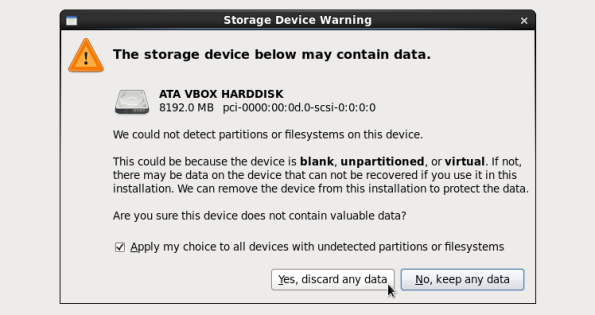

Early on in the installation CentOS will issue a ‘Storage Device Warning’. This is for your newly created 8GB disk. In this case you can select ‘Yes, discard any data’. Warning: If you are not doing this in a VM, you must know what you are doing or you risk losing data.

Where it asks you for hostname, you can leave it as the default. I’ve taken to naming them {OS}.{DB} in lowercase; so in this case I’m naming it ‘centos.universe’.

Once the installation is finished, you can restart the VM image. Be sure to remove the CD/DVD image so that it boots from the hard drive. It will ask you a few final questions once it restarts (such as entering a non-root user) before it takes you to the login prompt.

To make our life easier, once we have logged in, we will add ourselves to the list of allowed sudoers. To do this, open a terminal window by selecting Applications -> System Tools -> Terminal. I also added this shortcut to the desktop since I use it so much.

In the terminal, switch to the root user by running ‘su -‘. We can now edit the list of sudoers using visudo. At the end of the file, add ‘{user} ALL=(ALL) ALL’ where {user} is the username you created for yourself earlier.

Now is a good time to shut down and take a back-up of the image so you can clone as many freshly minted VMs as you want. Before taking the back-up, I also try to do some common tasks such as installing/updating gcc (terminal: ‘sudo yum install gcc’), installing Google Chrome (http://google.com/chrome) and installing ant (terminal: ‘sudo yum install ant’).

Installing UniVerse

Download UniVerse Personal Edition inside your VM image and place it into a temporary directory.

While you are waiting for it to download, you can create the ‘uvsql’ user we will require later. From the ‘System’ menu, select ‘Administration’ -> ‘Users and Groups’. Once you have the program up, click on the ‘Add User’ button, then fill in the username and password fields. Click ok and exit out of the user manager.
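If you prefer the terminal to the ‘Users and Groups’ dialog, the standard useradd and passwd commands do the same job. The snippet below is a small sketch with made-up helper names (user_exists, ensure_user); it only checks whether the account is there and prints the commands you would need, since actually creating the user requires root.

```shell
# Sketch: check for the 'uvsql' account and show how to create it.
user_exists() {
    # Succeeds when the named account is already known to the system.
    id -u "$1" >/dev/null 2>&1
}

ensure_user() {
    if user_exists "$1"; then
        echo "user $1 already exists"
    else
        # useradd and passwd need root, so they are printed, not executed.
        echo "sudo useradd $1 && sudo passwd $1"
    fi
}

ensure_user uvsql
```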

Open up a terminal window then change to your temporary directory where the UniVerse download is located. The first step will be to extract everything from the compressed file; to do this you can type in ‘unzip UVPE_RHLNXENTINT_11.1.9.zip’. Replace ‘UVPE_RHLNXENTINT_11.1.9.zip’ with whatever the downloaded filename is in your case.

The next step will be to extract the uv.load script to install UniVerse. To complete this step, run this command to extract it from the STARTUP archive: ‘cpio -ivcBdum uv.load < ./STARTUP'
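The two extraction steps above can be wrapped up with a couple of sanity checks. This is a sketch only: the function names are hypothetical, and it assumes you run it from the temporary directory that holds the download. It stops early if unzip or cpio is not installed or the zip file cannot be found.

```shell
# Sketch: unzip the download, then pull uv.load out of the STARTUP archive.
missing_tools() {
    # Prints the name of each listed tool that is not on the PATH.
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

extract_uv_load() {
    # $1 = the downloaded zip, e.g. UVPE_RHLNXENTINT_11.1.9.zip
    missing=$(missing_tools unzip cpio)
    if [ -n "$missing" ]; then
        echo "missing tools: $missing" >&2
        return 1
    fi
    if [ ! -f "$1" ]; then
        echo "no such file: $1" >&2
        return 1
    fi
    # Same commands as in the text, run one after the other.
    unzip "$1" && cpio -ivcBdum uv.load < ./STARTUP
}

# Example (run inside the temporary directory):
#   extract_uv_load UVPE_RHLNXENTINT_11.1.9.zip
```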

You can now run uv.load as root with the following command: 'sudo ./uv.load'. Select 1 on the first prompt to install UniVerse with 'root' as the default owner. This is okay as we are just building a dev system.

On the next screen, select option 4 to change the 'Install Media Path' to the path of the temporary directory you extracted UniVerse into. In my case it was '/home/itcmcgrath/temp'. The rest of the options can be left at their defaults. Press [Enter] to continue with the installation process.

UniVerse will now be installed, and you will then be put into an administrative program. [Esc] out of this to drop to a UniVerse prompt, then type in 'QUIT' to drop back to the command line.

There you have it, a working UniVerse server running in a Virtual Machine. Shut down your VM and take a copy of the machine image so you have a fresh copy of UniVerse in easy reach.

Update: Configure firewall

If you want to access UniVerse (say using XAdmin from the U2 DBTools package), you will need to modify your iptables configuration.

First, in my case I have the VirtualBox network adapter set to ‘Bridged’. Now, in a shell window, open the iptables configuration for editing: ‘sudo vi /etc/sysconfig/iptables’

In vi, before any LOG or REJECT lines, add ‘-A INPUT -m state --state NEW -m tcp -p tcp --dport 31438 -j ACCEPT’.

Once that is done, you simply run ‘sudo service iptables restart’ to pick up the changes.
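Before firing up XAdmin, it can be handy to confirm from another machine that the port is actually reachable. Here is a minimal sketch, assuming bash (for its /dev/tcp feature) and the coreutils timeout command are available on the machine you test from; port_open is a made-up helper name and the IP address in the example is a placeholder for your VM's address.

```shell
# Sketch: test whether a TCP port accepts connections.
port_open() {
    # $1 = host, $2 = TCP port; succeeds if a connection opens within 3s.
    timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Example (replace the address with your VM's IP):
#   if port_open 192.168.1.50 31438; then echo "open"; else echo "closed"; fi
```

If this reports the port as closed after the restart, the usual suspects are the new rule sitting below a REJECT line or the VM not being on a bridged adapter.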

The updated iptables file

XAdmin once connected

Disclaimer: This does not create a UniVerse server that will be appropriate to run as a production server.